
Long Read | Programming Kubernetes: How to Write an Operator

Translated by Liu Chuanchuan, Newtyun (新钛云服), cloud and security managed services experts

Overview

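As we get to know Kubernetes better, we may find that its built-in object types, such as Deployment, StatefulSet, and ConfigMap, no longer cover all of our needs. We would like to define our own objects in Kubernetes so that, first, they are served through kube-apiserver as a single, unified entry point and, second, we can manage them with kubectl just like any built-in object.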

Kubernetes provides ways to define such custom objects, and one of them is the CRD.

Introduction to CRDs

CRDs (CustomResourceDefinitions) were introduced in v1.7 as the successor to ThirdPartyResources (TPR), and TPR was removed in v1.8. CRDs are now very widely used across the ecosystem, for example by Ingress controllers and by Rancher.

Let's look at an example from the official documentation. With the following YAML file, we can create an API:

```yaml
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  # The name must match the spec fields below and be in the form '<plural>.<group>'
  name: crontabs.stable.example.com
spec:
  # Group name used for the REST API: /apis/<group>/<version>
  group: stable.example.com
  # List of versions supported by this CustomResourceDefinition
  versions:
    - name: v1
      # Each version can be enabled or disabled independently with the served flag
      served: true
      # One and only one version must be marked as the storage version
      storage: true
      schema:
        openAPIV3Schema:
          type: object
          properties:
            spec:
              type: object
              properties:
                cronSpec:
                  type: string
                image:
                  type: string
                replicas:
                  type: integer
  # Either Namespaced or Cluster
  scope: Namespaced
  names:
    # Plural name used in the URL: /apis/<group>/<version>/<plural>
    plural: crontabs
    # Singular name, used as an alias on the command line and for display
    singular: crontab
    # kind is normally the CamelCased singular form; resource manifests use this form
    kind: CronTab
    # shortNames allow a shorter string to match the resource on the command line
    shortNames:
    - ct
```

We can now create this CRD with kubectl create, just like any other object. Once it is created, a type named CronTab is registered in kube-apiserver and is reachable through REST endpoints. For example, the CronTab objects in namespace ns1 are available at "/apis/stable.example.com/v1/namespaces/ns1/crontabs/". These endpoints follow exactly the same style as those of the built-in Kubernetes objects.
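The naming rules in this CRD are mechanical, so a small sketch can make them concrete. The Go program below is only an illustration and is not part of any Kubernetes library: the crdNames type and its two methods are hypothetical helpers that derive the required metadata.name (<plural>.<group>) and the REST collection path from the group, version, and plural fields of the CronTab example.

```go
package main

import (
	"fmt"
	"strings"
)

// crdNames holds the CronTab CRD fields that determine its name and URLs.
// Hypothetical helper type for illustration, not a Kubernetes API type.
type crdNames struct {
	Group   string // spec.group
	Version string // spec.versions[].name
	Plural  string // spec.names.plural
}

// crdName returns the metadata.name the CRD must use: "<plural>.<group>".
func (n crdNames) crdName() string {
	return n.Plural + "." + n.Group
}

// collectionPath returns the REST path that lists objects of this kind.
// An empty namespace yields the cluster-wide path.
func (n crdNames) collectionPath(namespace string) string {
	parts := []string{"", "apis", n.Group, n.Version}
	if namespace != "" {
		parts = append(parts, "namespaces", namespace)
	}
	parts = append(parts, n.Plural)
	return strings.Join(parts, "/") + "/"
}

func main() {
	cronTab := crdNames{Group: "stable.example.com", Version: "v1", Plural: "crontabs"}
	fmt.Println(cronTab.crdName())             // crontabs.stable.example.com
	fmt.Println(cronTab.collectionPath("ns1")) // /apis/stable.example.com/v1/namespaces/ns1/crontabs/
}
```

With the CronTab values, the second line printed is the same URL quoted above.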

With CronTab declared, let's see how to create an object of type CronTab. The following is again an example from the official documentation:

```yaml
apiVersion: "stable.example.com/v1"
kind: CronTab
metadata:
  name: new-cron-object
spec:
  cronSpec: "* * * * */5"
  image: awesome-cron-image
```

After creating new-cron-object with kubectl create, we can view it with kubectl get and manage this CronTab object with kubectl. For example:

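```shell
kubectl get crontab
NAME              AGE
new-cron-object   6s
```

Resource names are case-insensitive here, so we can also use the short name with kubectl get ct, or the plural with kubectl get crontabs. Just like native built-in API objects, these custom resources can not only be created, viewed, modified, and deleted with kubectl, but can also have RBAC rules configured for them.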
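As a rough model of why kubectl get crontab, kubectl get crontabs, and kubectl get ct all resolve to the same resource, the sketch below compares the typed name case-insensitively against the plural, singular, kind, and shortNames from the CRD's spec.names. The resourceNames type and its matches method are hypothetical; kubectl actually resolves names through API discovery and a REST mapper, which this does not reproduce.

```go
package main

import (
	"fmt"
	"strings"
)

// resourceNames mirrors the spec.names block of the CronTab CRD.
// Hypothetical type for illustration only.
type resourceNames struct {
	Plural     string
	Singular   string
	Kind       string
	ShortNames []string
}

// matches reports whether a name typed on the command line refers to this
// resource, comparing case-insensitively against every accepted form.
func (r resourceNames) matches(input string) bool {
	candidates := append([]string{r.Plural, r.Singular, r.Kind}, r.ShortNames...)
	for _, c := range candidates {
		if strings.EqualFold(input, c) {
			return true
		}
	}
	return false
}

func main() {
	cronTab := resourceNames{
		Plural:     "crontabs",
		Singular:   "crontab",
		Kind:       "CronTab",
		ShortNames: []string{"ct"},
	}
	for _, name := range []string{"crontab", "CronTabs", "ct", "cron"} {
		fmt.Printf("%-9s -> %v\n", name, cronTab.matches(name))
	}
}
```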

We can also write custom controllers that watch and act on these custom APIs, which is what we introduce next. To decide whether an API should be defined inside Kubernetes or run standalone, see Custom Resources | Kubernetes (https://kubernetes.io/zh/docs/concepts/extend-kubernetes/api-extension/custom-resources/#我是否应该向我的-kubernetes-集群添加定制资源).

What is a Kubernetes Operator

The name Operator may be unfamiliar. It was first proposed by CoreOS (https://coreos.com/operators/) in 2016. Here is their definition:

An operator is a method of packaging, deploying and managing a Kubernetes application. A Kubernetes application is an application that is both deployed on Kubernetes and managed using the Kubernetes APIs and kubectl tooling.

To be able to make the most of Kubernetes, you need a set of cohesive APIs to extend in order to service and manage your applications that run on Kubernetes. You can think of Operators as the runtime that manages this type of application on Kubernetes.

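In short, a Kubernetes Operator uses the Kubernetes controller pattern together with some custom APIs to operate a particular class of application, for example creating, changing, and deleting its resources.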

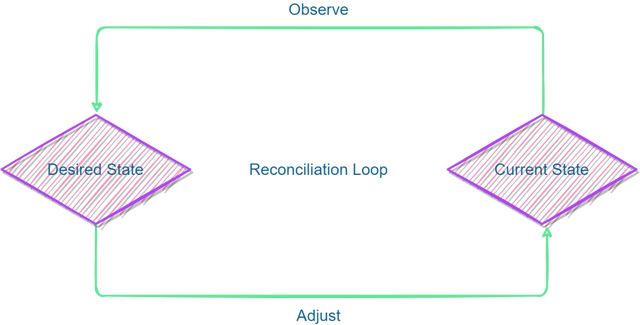

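A brief word on the Kubernetes controller pattern: Kubernetes defines objects through a declarative API, and each controller continuously watches the state of the objects it is responsible for and works to bring them to the declared desired state. That is the controller pattern.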

kube-controller-manager is made up of a set of such controllers. Let's use StatefulSet as an example to illustrate a controller's logic.

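Suppose we declare a StatefulSet with 3 replicas. The StatefulSet controller, which runs as a goroutine inside kube-controller-manager, watches kube-apiserver and notices the newly created object. It first creates the Pod with index 0 and watches its status; once that Pod is Running, it creates the Pod with index 1, and so on. From then on, the controller keeps watching and maintaining these Pods so that the StatefulSet always has 3 available replicas.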
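The ordered, one-step-at-a-time behavior described above can be illustrated with a toy model. This is not the StatefulSet controller's code: pod and reconcileOnce are hypothetical, the sketch assumes the default OrderedReady policy, and it reduces "Running and Ready" to a single Running flag. Each call takes at most one action, which mirrors how a controller is re-triggered by events rather than looping to completion.

```go
package main

import (
	"fmt"
	"strings"
)

// pod is a toy stand-in for a StatefulSet Pod: an ordinal and whether it is Running.
type pod struct {
	Ordinal int
	Running bool
}

// reconcileOnce takes at most one action per call. Pods are created in ordinal
// order, and a new Pod is created only once every existing Pod is Running.
func reconcileOnce(desired int, pods []pod) ([]pod, string) {
	for _, p := range pods {
		if !p.Running {
			return pods, fmt.Sprintf("waiting for pod-%d", p.Ordinal)
		}
	}
	switch {
	case len(pods) < desired:
		next := pod{Ordinal: len(pods)}
		return append(pods, next), fmt.Sprintf("created pod-%d", next.Ordinal)
	case len(pods) > desired:
		// Scale down starting from the highest ordinal.
		last := pods[len(pods)-1]
		return pods[:len(pods)-1], fmt.Sprintf("deleted pod-%d", last.Ordinal)
	default:
		return pods, "in sync"
	}
}

func main() {
	var pods []pod
	for i := 0; i < 10; i++ {
		var action string
		pods, action = reconcileOnce(3, pods)
		fmt.Println(action)
		if action == "in sync" {
			break
		}
		if strings.HasPrefix(action, "waiting") {
			// Simulate the kubelet: pending Pods become Running before the next event.
			for j := range pods {
				pods[j].Running = true
			}
		}
	}
}
```

Run as-is, it alternates between creating a Pod and waiting for it (pod-0, pod-1, pod-2) and then reports in sync.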

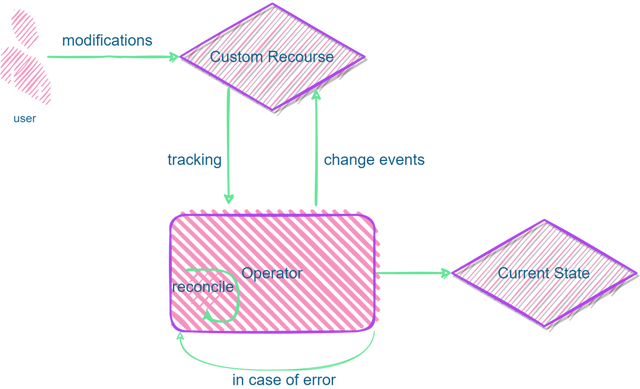

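So after declaring a CRD, we also need a controller, the Operator, to implement the corresponding control logic. Now that we understand the Operator concept and the controller pattern, let's look at how an Operator works.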

How a Kubernetes Operator works

An Operator uses the controller pattern described above and continuously watches custom objects in Kubernetes, that is, CRs (Custom Resources). A CRD defines a type, and a CR is an instance of that CRD.

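The Operator keeps track of change events on these CRs, such as ADD, UPDATE, and DELETE, and takes a series of actions to bring them to the desired state. This process is fairly involved, and it presents a real barrier for operations engineers in particular. Fortunately, the community provides scaffolding tools that help us build our own Operator quickly.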

Building your own Kubernetes Operator

Several open-source projects in the community can be used to create Kubernetes Operators, for example Operator SDK (https://github.com/operator-framework/operator-sdk), Kubebuilder (https://github.com/kubernetes-sigs/kubebuilder), and KUDO (https://github.com/kudobuilder/kudo). Here we use Operator SDK, so let's install it first.

Installing Operator SDK from the binary release

Prerequisites

- curl(https://curl.haxx.se/)

- gpg(https://gnupg.org/) version 2.0+

- For version compatibility, see kubernetes/client-go: Go client for Kubernetes (https://github.com/kubernetes/client-go#compatibility-matrix).

1. Download the binary

Set the platform information:

```shell
[root@blog ~]# export ARCH=$(case $(uname -m) in x86_64) echo -n amd64 ;; aarch64) echo -n arm64 ;; *) echo -n $(uname -m) ;; esac)
[root@blog ~]# export OS=$(uname | awk '{print tolower($0)}')
```

Download the binary for this platform:

```shell
[root@blog ~]# export OPERATOR_SDK_DL_URL=https://github.com/operator-framework/operator-sdk/releases/download/v1.16.0
[root@blog ~]# curl -LO ${OPERATOR_SDK_DL_URL}/operator-sdk_${OS}_${ARCH}
```

2. Verify the downloaded file (optional)

Import the GPG key for operator-sdk releases from keyserver.ubuntu.com:

```shell
[root@blog ~]# gpg --keyserver keyserver.ubuntu.com --recv-keys 052996E2A20B5C7E
```

Download the checksums file and its signature, then verify the signature:

```shell
[root@blog ~]# curl -LO ${OPERATOR_SDK_DL_URL}/checksums.txt
[root@blog ~]# curl -LO ${OPERATOR_SDK_DL_URL}/checksums.txt.asc
[root@blog ~]# gpg -u "Operator SDK (release) <cncf-operator-sdk@cncf.io>" --verify checksums.txt.asc
```

We should see output similar to the following:

```
gpg: assuming signed data in 'checksums.txt'
gpg: Signature made Fri 30 Oct 2020 12:15:15 PM PDT
gpg:                using RSA key ADE83605E945FA5A1BD8639C59E5B47624962185
gpg: Good signature from "Operator SDK (release) <cncf-operator-sdk@cncf.io>" [ultimate]
```

Make sure the checksums match:

```shell
[root@blog ~]# grep operator-sdk_${OS}_${ARCH} checksums.txt | sha256sum -c -
```

The output should look similar to this:

```
operator-sdk_linux_amd64: OK
```

3. Put the binary in your PATH

```shell
[root@blog ~]# chmod +x operator-sdk_${OS}_${ARCH} && sudo mv operator-sdk_${OS}_${ARCH} /usr/local/bin/operator-sdk
```

Building and installing Operator SDK from source

Prerequisites

- git(https://git-scm.com/downloads)

- go version 1.16+

- Make sure GOPROXY is set to "https://goproxy.cn"

```shell
[root@blog ~]# export GO111MODULE=on
[root@blog ~]# export GOPROXY=https://goproxy.cn
[root@blog ~]# git clone https://github.com/operator-framework/operator-sdk
[root@blog ~]# cd operator-sdk
[root@blog operator-sdk]# make install
```

Verify the version:

```shell
[root@blog operator-sdk]# operator-sdk version
operator-sdk version: "v1.16.0", commit: "560044140c4f3d88677e4ef2872931f5bb97f255", kubernetes version: "1.21", go version: "go1.16.13", GOOS: "linux", GOARCH: "amd64"
# The output above tells us which versions to use:
# operator-sdk version: v1.16.0
# Go version: 1.16.13
# Kubernetes version: 1.21
[root@blog operator-sdk]# go version
go version go1.16.13 linux/amd64
```

Either method gives us the basic environment, and next we'll create an Operator. Operators can be built with the SDK using Ansible, Helm, or Go; the Ansible and Helm approaches are somewhat simpler. This article uses Go together with Operator SDK.

Creating an Operator with Go

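Operator SDK provides the following workflow for developing a new Operator:

- Create a new Operator project with the SDK

- Define new resource APIs by adding Custom Resource Definitions (CRDs)

- Specify the resources to watch using the SDK API

- Define the Operator's reconcile logic

- Use Operator SDK to build the Operator and generate its deployment manifests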

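Prerequisites

- operator-sdk installed as described above.

- cluster-admin permissions.

- An accessible registry for the Operator image (for example hub.docker.com or quay.io) that you can log in to from the command line.

- In this example, example.com is used as the namespace on Docker Hub. If you use a different registry or namespace, substitute it accordingly.

- If the registry is private, have the relevant credentials or certificates ready.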

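Next, we will create a project whose controller does the following:

- Creates a Memcached Deployment if one does not exist

- Ensures that the Deployment size matches the size specified in the Memcached CR

- Updates the Memcached CR status with the names of the CR's pods, using the status writer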

Creating the project

Use the command-line tool to create a project named memcached-operator:

```shell
[root@blog operator-sdk]# mkdir /root/memcached-operator
[root@blog operator-sdk]# cd /root/memcached-operator
[root@blog memcached-operator]# export GO111MODULE=on && export GOPROXY=https://goproxy.cn
[root@blog memcached-operator]# operator-sdk init \
    --domain example.com \
    --repo github.com/example/memcached-operator
Writing kustomize manifests for you to edit...
Writing scaffold for you to edit...
Get controller runtime:
$ go get sigs.k8s.io/controller-runtime@v0.10.0
Update dependencies:
$ go mod tidy
Next: define a resource with:
$ operator-sdk create api
```

Once it's created, let's look at the directory structure:

```
[root@blog memcached-operator]# tree -L 2
.
├── config
│   ├── default
│   ├── manager
│   ├── manifests
│   ├── prometheus
│   ├── rbac
│   └── scorecard
├── Dockerfile
├── go.mod
├── go.sum
├── hack
│   └── boilerplate.go.txt
├── main.go
├── Makefile
└── PROJECT

8 directories, 7 files
```

operator-sdk init generates a go.mod file. If the project is not under $GOPATH/src, the --repo=<path> flag is required, because the scaffolding needs a valid module path.

Before using the SDK, make sure Go module support is enabled by setting export GO111MODULE=on. To speed up downloading Go dependencies, set a suitable proxy, for example export GOPROXY=https://goproxy.cn.

At this point, we can build the project with go build:

```
[root@blog memcached-operator]# go build
[root@blog memcached-operator]# ll
total 44788
drwx------ 8 root root      100 Mar 30 21:01 config
-rw------- 1 root root      776 Mar 30 20:59 Dockerfile
-rw------- 1 root root      162 Mar 30 21:01 go.mod
-rw-r--r-- 1 root root    77000 Mar 30 21:01 go.sum
drwx------ 2 root root       32 Mar 30 20:59 hack
-rw------- 1 root root     2780 Mar 30 20:59 main.go
-rw------- 1 root root     9449 Mar 30 21:01 Makefile
-rwxr-xr-x 1 root root 45754092 Mar 30 21:02 memcached-operator
-rw------- 1 root root      235 Mar 30 21:01 PROJECT
```

The directory also contains a PROJECT file; let's see what it holds.

```yaml
[root@blog memcached-operator]# cat PROJECT
domain: example.com
layout:
- go.kubebuilder.io/v3
plugins:
  manifests.sdk.operatorframework.io/v2: {}
  scorecard.sdk.operatorframework.io/v2: {}
projectName: memcached-operator
repo: github.com/example/memcached-operator
version: "3"
```

It mainly contains the project's configuration.

Manager

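The Operator's main program, main.go, initializes and runs the Manager (https://pkg.go.dev/sigs.k8s.io/controller-runtime/pkg/manager#Manager). For details on how the Manager registers the Scheme for the custom resource API definitions and sets up and runs controllers and webhooks, see the Kubebuilder entrypoint documentation (https://book.kubebuilder.io/cronjob-tutorial/empty-main.html). The Manager can restrict the namespace in which all controllers watch resources: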

```go
mgr, err := ctrl.NewManager(ctrl.GetConfigOrDie(), ctrl.Options{
	Scheme:                 scheme,
	Namespace:              namespace, // watch only this namespace ("" means all namespaces)
	MetricsBindAddress:     metricsAddr,
	Port:                   9443,
	HealthProbeBindAddress: probeAddr,
	LeaderElection:         enableLeaderElection,
	LeaderElectionID:       "86f835c3.my.domain",
})
```

We can also use MultiNamespacedCacheBuilder to watch a set of namespaces:

```go
var namespaces []string // list of namespaces to watch
mgr, err := ctrl.NewManager(ctrl.GetConfigOrDie(), ctrl.Options{
	Scheme:                 scheme,
	NewCache:               cache.MultiNamespacedCacheBuilder(namespaces),
	MetricsBindAddress:     fmt.Sprintf("%s:%d", metricsHost, metricsPort),
	Port:                   9443,
	HealthProbeBindAddress: probeAddr,
	LeaderElection:         enableLeaderElection,
	LeaderElectionID:       "86f835c3.my.domain",
})
```

For more details, see the MultiNamespacedCacheBuilder documentation (https://pkg.go.dev/sigs.k8s.io/controller-runtime/pkg/cache?tab=doc#MultiNamespacedCacheBuilder).
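To illustrate what restricting the Manager to a set of namespaces means for the controllers, here is a toy model in which only objects from allowed namespaces end up in the cache. The namespacedCache type is hypothetical and deliberately simplified: as far as we understand it, the real multi-namespace cache keeps a separate cache and watch per namespace, so objects from other namespaces are never fetched at all, rather than fetched and dropped as here.

```go
package main

import "fmt"

// object is a toy Kubernetes object identified by namespace and name.
type object struct{ Namespace, Name string }

// namespacedCache stores only objects from an allow-list of namespaces.
// Hypothetical, simplified model for illustration.
type namespacedCache struct {
	allowed map[string]bool
	items   []object
}

func newNamespacedCache(namespaces []string) *namespacedCache {
	c := &namespacedCache{allowed: map[string]bool{}}
	for _, ns := range namespaces {
		c.allowed[ns] = true
	}
	return c
}

// observe records an object if its namespace is watched and reports whether it was kept.
func (c *namespacedCache) observe(o object) bool {
	if !c.allowed[o.Namespace] {
		return false
	}
	c.items = append(c.items, o)
	return true
}

func main() {
	c := newNamespacedCache([]string{"team-a", "team-b"})
	for _, o := range []object{
		{"team-a", "memcached-sample"},
		{"kube-system", "coredns"},
		{"team-b", "memcached-other"},
	} {
		fmt.Printf("%s/%s kept=%v\n", o.Namespace, o.Name, c.observe(o))
	}
	fmt.Println("cached objects:", len(c.items))
}
```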

Creating the API and Controller

Next, create a new Custom Resource Definition (CRD) API with group cache, version v1alpha1, and kind Memcached.

```shell
[root@blog memcached-operator]# operator-sdk create api \
    --group cache \
    --version v1alpha1 \
    --kind Memcached \
    --resource \
    --controller
# Output of the command above:
Writing scaffold for you to edit...
api/v1alpha1/memcached_types.go
controllers/memcached_controller.go
Update dependencies:
$ go mod tidy
Running make:
$ make generate
go: creating new go.mod: module tmp
Downloading sigs.k8s.io/controller-tools/cmd/controller-gen@v0.7.0   # downloads the controller-gen binary
go: downloading sigs.k8s.io/controller-tools v0.7.0
go: downloading golang.org/x/tools v0.1.5
go: downloading k8s.io/apimachinery v0.22.2
go: downloading k8s.io/api v0.22.2
go: downloading k8s.io/apiextensions-apiserver v0.22.2
go: downloading github.com/inconshreveable/mousetrap v1.0.0
go: downloading golang.org/x/sys v0.0.0-20210616094352-59db8d763f22
go: downloading k8s.io/utils v0.0.0-20210819203725-bdf08cb9a70a
go: downloading golang.org/x/mod v0.4.2
go get: added sigs.k8s.io/controller-tools v0.7.0
/root/memcached-operator/bin/controller-gen object:headerFile="hack/boilerplate.go.txt" paths="./..."
Next: implement your new API and generate the manifests (e.g. CRDs,CRs) with:
$ make manifests
```

Check the PROJECT file again:

```yaml
[root@blog memcached-operator]# cat PROJECT
domain: example.com
layout:
- go.kubebuilder.io/v3
plugins:
  manifests.sdk.operatorframework.io/v2: {}
  scorecard.sdk.operatorframework.io/v2: {}
projectName: memcached-operator
repo: github.com/example/memcached-operator
resources:
- api:
    crdVersion: v1
    namespaced: true
  controller: true
  domain: example.com
  group: cache
  kind: Memcached
  path: github.com/example/memcached-operator/api/v1alpha1
  version: v1alpha1
version: "3"
```

This generates the Memcached resource API in api/v1alpha1/memcached_types.go and the controller in controllers/memcached_controller.go.

Note: this article covers only a single-group API. To support multiple API groups, see the Single Group to Multi-Group documentation (https://book.kubebuilder.io/migration/multi-group.html).

Let's look at the directory structure again:

```
[root@blog memcached-operator]# tree -L 2
.
├── api
│   └── v1alpha1
├── bin
│   └── controller-gen
├── config
│   ├── crd
│   ├── default
│   ├── manager
│   ├── manifests
│   ├── prometheus
│   ├── rbac
│   ├── samples
│   └── scorecard
├── controllers
│   ├── memcached_controller.go
│   └── suite_test.go
├── Dockerfile
├── go.mod
├── go.sum
├── hack
│   └── boilerplate.go.txt
├── main.go
├── Makefile
└── PROJECT

14 directories, 10 files
```

Understanding Kubernetes APIs

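For an in-depth explanation of Kubernetes APIs and the group-version-kind model, see these kubebuilder docs (https://book.kubebuilder.io/cronjob-tutorial/gvks.html). In general, it is recommended to have one controller responsible for managing each API in the project, following the design goals set by controller-runtime (https://github.com/kubernetes-sigs/controller-runtime).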

Defining the API

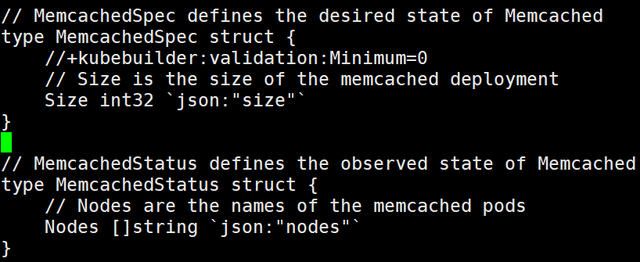

First, we represent our API by defining the Memcached type, with a MemcachedSpec.Size field that sets the number of memcached instances (CRs) to deploy, and a MemcachedStatus.Nodes field that stores the CR's Pod names.

Note: the Nodes field is used here only to demonstrate a Status field. In real projects, Conditions (https://github.com/kubernetes/community/blob/master/contributors/devel/sig-architecture/api-conventions.md#typical-status-properties) are recommended instead.

Next, modify the Go type definitions in api/v1alpha1/memcached_types.go to define the API for the Memcached Custom Resource (CR) with the following spec and status:

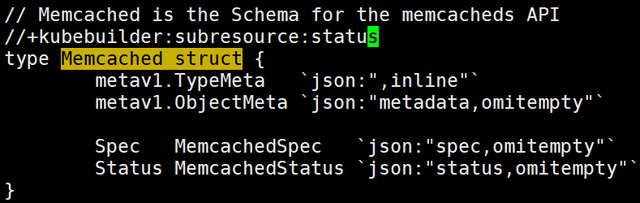

```go
// MemcachedSpec defines the desired state of Memcached
type MemcachedSpec struct {
	//+kubebuilder:validation:Minimum=0
	// Size is the size of the memcached deployment
	Size int32 `json:"size"`
}

// MemcachedStatus defines the observed state of Memcached
type MemcachedStatus struct {
	// Nodes are the names of the memcached pods
	Nodes []string `json:"nodes"`
}
```

Then add the +kubebuilder:subresource:status marker (https://book.kubebuilder.io/reference/generating-crd.html#status) to add the status subresource (https://kubernetes.io/docs/tasks/extend-kubernetes/custom-resources/custom-resource-definitions/#status-subresource) to the CRD manifest, so that the controller can update the CR status without changing the rest of the CR object:

```go
// Memcached is the Schema for the memcacheds API
//+kubebuilder:subresource:status
type Memcached struct {
	metav1.TypeMeta   `json:",inline"`
	metav1.ObjectMeta `json:"metadata,omitempty"`

	Spec   MemcachedSpec   `json:"spec,omitempty"`
	Status MemcachedStatus `json:"status,omitempty"`
}
```

The //+kubebuilder:subresource:status line is the one being added. After modifying a *_types.go file, remember to run the following command to generate code for that resource type:

```shell
[root@blog memcached-operator]# make generate
/root/memcached-operator/bin/controller-gen object:headerFile="hack/boilerplate.go.txt" paths="./..."
```

The generate target in the Makefile invokes the controller-gen (https://sigs.k8s.io/controller-tools) utility to update api/v1alpha1/zz_generated.deepcopy.go, which ensures that our API's Go type definitions implement the runtime.Object interface that every Kind type must implement.
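To show what the generated deepcopy code is for, the sketch below hand-writes DeepCopyInto and DeepCopy for a local copy of MemcachedStatus, in the style controller-gen uses in zz_generated.deepcopy.go. It is an illustration under stated assumptions: the real generated file also provides DeepCopyObject for the Memcached and MemcachedList types (which is what satisfies runtime.Object) and depends on apimachinery, both omitted here to keep the example standalone.

```go
package main

import "fmt"

// MemcachedStatus is a local copy of the API type, for illustration only.
type MemcachedStatus struct {
	Nodes []string `json:"nodes"`
}

// DeepCopyInto copies every field into out, allocating a new backing array
// for the slice, in the style of controller-gen's generated code.
func (in *MemcachedStatus) DeepCopyInto(out *MemcachedStatus) {
	*out = *in
	if in.Nodes != nil {
		in, out := &in.Nodes, &out.Nodes
		*out = make([]string, len(*in))
		copy(*out, *in)
	}
}

// DeepCopy returns a fully independent copy of the receiver (nil-safe).
func (in *MemcachedStatus) DeepCopy() *MemcachedStatus {
	if in == nil {
		return nil
	}
	out := new(MemcachedStatus)
	in.DeepCopyInto(out)
	return out
}

func main() {
	orig := &MemcachedStatus{Nodes: []string{"pod-a", "pod-b"}}
	shallow := *orig        // copies only the slice header
	deep := orig.DeepCopy() // copies the underlying array too
	orig.Nodes[0] = "changed"
	fmt.Println(shallow.Nodes[0], deep.Nodes[0]) // changed pod-a
}
```

The slice is the point: a plain struct copy shares the Nodes backing array with the original, so mutating a shallow copy of a cached object would also change the cached object, while the deep copy does not.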

Generating CRD manifests

Once the API is defined with spec/status fields and CRD validation markers, the CRD manifests can be generated and updated with the following command:

```shell
[root@blog memcached-operator]# make manifests
/root/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
```

This manifests target in the Makefile invokes controller-gen to generate the CRD manifest in config/crd/bases/cache.example.com_memcacheds.yaml.

OpenAPI validation

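OpenAPI validation defined in a CRD ensures that CRs are validated against a set of declarative rules. All CRDs should have validation. For details, see the OpenAPI validation documentation (https://sdk.operatorframework.io/docs/building-operators/golang/references/openapi-validation).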
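As an illustration of what the //+kubebuilder:validation:Minimum=0 marker buys us, the sketch below applies the same rule to a raw spec in plain Go. This is only a model: in a real cluster the check is done by the API server from the CRD's OpenAPI schema before the object is stored, and the error text here is invented rather than the API server's actual message. validateSpec is a hypothetical helper.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// MemcachedSpec mirrors the spec type defined earlier (illustration only).
type MemcachedSpec struct {
	Size int32 `json:"size"`
}

// validateSpec mimics the effect of the schema: size must be an integer >= 0.
// Hypothetical helper; the real check happens in the API server.
func validateSpec(raw []byte) error {
	var spec MemcachedSpec
	if err := json.Unmarshal(raw, &spec); err != nil {
		return fmt.Errorf("invalid spec: %w", err)
	}
	if spec.Size < 0 {
		return fmt.Errorf("spec.size: must be greater than or equal to 0, got %d", spec.Size)
	}
	return nil
}

func main() {
	for _, raw := range []string{`{"size": 3}`, `{"size": -1}`, `{"size": "three"}`} {
		fmt.Printf("%s -> %v\n", raw, validateSpec([]byte(raw)))
	}
}
```

Rejecting invalid input at admission time means the controller never has to handle a negative size.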

Implementing the Controller

For this example, replace the generated controller file controllers/memcached_controller.go with the example memcached_controller.go below:

```go
/*
Copyright 2022.

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.
*/

package controllers

import (
	"context"
	"reflect"
	"time"

	appsv1 "k8s.io/api/apps/v1"
	corev1 "k8s.io/api/core/v1"
	"k8s.io/apimachinery/pkg/api/errors"
	metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"
	"k8s.io/apimachinery/pkg/runtime"
	"k8s.io/apimachinery/pkg/types"
	ctrl "sigs.k8s.io/controller-runtime"
	"sigs.k8s.io/controller-runtime/pkg/client"
	ctrllog "sigs.k8s.io/controller-runtime/pkg/log"

	cachev1alpha1 "github.com/example/memcached-operator/api/v1alpha1"
)

// MemcachedReconciler reconciles a Memcached object
type MemcachedReconciler struct {
	client.Client
	Scheme *runtime.Scheme
}

//+kubebuilder:rbac:groups=cache.example.com,resources=memcacheds,verbs=get;list;watch;create;update;patch;delete
//+kubebuilder:rbac:groups=cache.example.com,resources=memcacheds/status,verbs=get;update;patch
//+kubebuilder:rbac:groups=cache.example.com,resources=memcacheds/finalizers,verbs=update
//+kubebuilder:rbac:groups=apps,resources=deployments,verbs=get;list;watch;create;update;patch;delete
//+kubebuilder:rbac:groups=core,resources=pods,verbs=get;list;watch

// Reconcile is part of the main kubernetes reconciliation loop which aims to
// move the current state of the cluster closer to the desired state.
// TODO(user): Modify the Reconcile function to compare the state specified by
// the Memcached object against the actual cluster state, and then
// perform operations to make the cluster state reflect the state specified by
// the user.
//
// For more details, check Reconcile and its Result here:
// - https://pkg.go.dev/sigs.k8s.io/controller-runtime@v0.11.0/pkg/reconcile
func (r *MemcachedReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) {
	log := ctrllog.FromContext(ctx)

	// Fetch the Memcached instance
	memcached := &cachev1alpha1.Memcached{}
	err := r.Get(ctx, req.NamespacedName, memcached)
	if err != nil {
		if errors.IsNotFound(err) {
			// Request object not found, could have been deleted after reconcile request.
			// Owned objects are automatically garbage collected. For additional cleanup logic use finalizers.
			// Return and don't requeue
			log.Info("Memcached resource not found. Ignoring since object must be deleted")
			return ctrl.Result{}, nil
		}
		// Error reading the object - requeue the request.
		log.Error(err, "Failed to get Memcached")
		return ctrl.Result{}, err
	}

	// Check if the deployment already exists, if not create a new one
	found := &appsv1.Deployment{}
	err = r.Get(ctx, types.NamespacedName{Name: memcached.Name, Namespace: memcached.Namespace}, found)
	if err != nil && errors.IsNotFound(err) {
		// Define a new deployment
		dep := r.deploymentForMemcached(memcached)
		log.Info("Creating a new Deployment", "Deployment.Namespace", dep.Namespace, "Deployment.Name", dep.Name)
		err = r.Create(ctx, dep)
		if err != nil {
			log.Error(err, "Failed to create new Deployment", "Deployment.Namespace", dep.Namespace, "Deployment.Name", dep.Name)
			return ctrl.Result{}, err
		}
		// Deployment created successfully - return and requeue
		return ctrl.Result{Requeue: true}, nil
	} else if err != nil {
		log.Error(err, "Failed to get Deployment")
		return ctrl.Result{}, err
	}

	// Ensure the deployment size is the same as the spec
	size := memcached.Spec.Size
	if *found.Spec.Replicas != size {
		found.Spec.Replicas = &size
		err = r.Update(ctx, found)
		if err != nil {
			log.Error(err, "Failed to update Deployment", "Deployment.Namespace", found.Namespace, "Deployment.Name", found.Name)
			return ctrl.Result{}, err
		}
		// Ask to requeue after 1 minute in order to give enough time for the
		// pods be created on the cluster side and the operand be able
		// to do the next update step accurately.
		return ctrl.Result{RequeueAfter: time.Minute}, nil
	}

	// Update the Memcached status with the pod names
	// List the pods for this memcached's deployment
	podList := &corev1.PodList{}
	listOpts := []client.ListOption{
		client.InNamespace(memcached.Namespace),
		client.MatchingLabels(labelsForMemcached(memcached.Name)),
	}
	if err = r.List(ctx, podList, listOpts...); err != nil {
		log.Error(err, "Failed to list pods", "Memcached.Namespace", memcached.Namespace, "Memcached.Name", memcached.Name)
		return ctrl.Result{}, err
	}
	podNames := getPodNames(podList.Items)

	// Update status.Nodes if needed
	if !reflect.DeepEqual(podNames, memcached.Status.Nodes) {
		memcached.Status.Nodes = podNames
		err := r.Status().Update(ctx, memcached)
		if err != nil {
			log.Error(err, "Failed to update Memcached status")
			return ctrl.Result{}, err
		}
	}

	return ctrl.Result{}, nil
}

// deploymentForMemcached returns a memcached Deployment object
func (r *MemcachedReconciler) deploymentForMemcached(m *cachev1alpha1.Memcached) *appsv1.Deployment {
	ls := labelsForMemcached(m.Name)
	replicas := m.Spec.Size

	dep := &appsv1.Deployment{
		ObjectMeta: metav1.ObjectMeta{
			Name:      m.Name,
			Namespace: m.Namespace,
		},
		Spec: appsv1.DeploymentSpec{
			Replicas: &replicas,
			Selector: &metav1.LabelSelector{
				MatchLabels: ls,
			},
			Template: corev1.PodTemplateSpec{
				ObjectMeta: metav1.ObjectMeta{
					Labels: ls,
				},
				Spec: corev1.PodSpec{
					Containers: []corev1.Container{{
						Image:   "memcached:1.4.36-alpine",
						Name:    "memcached",
						Command: []string{"memcached", "-m=64", "-o", "modern", "-v"},
						Ports: []corev1.ContainerPort{{
							ContainerPort: 11211,
							Name:          "memcached",
						}},
					}},
				},
			},
		},
	}
	// Set Memcached instance as the owner and controller
	ctrl.SetControllerReference(m, dep, r.Scheme)
	return dep
}

// labelsForMemcached returns the labels for selecting the resources
// belonging to the given memcached CR name.
func labelsForMemcached(name string) map[string]string {
	return map[string]string{"app": "memcached", "memcached_cr": name}
}

// getPodNames returns the pod names of the array of pods passed in
func getPodNames(pods []corev1.Pod) []string {
	var podNames []string
	for _, pod := range pods {
		podNames = append(podNames, pod.Name)
	}
	return podNames
}

// SetupWithManager sets up the controller with the Manager.
func (r *MemcachedReconciler) SetupWithManager(mgr ctrl.Manager) error {
	return ctrl.NewControllerManagedBy(mgr).
		For(&cachev1alpha1.Memcached{}).
		Owns(&appsv1.Deployment{}).
		Complete(r)
}
```

Note: the next two subsections explain how the controller watches resources and how the reconcile loop is triggered. To skip them, go to the "Running the Operator" section to see how to run this operator.

Resources watched by the Controller

The SetupWithManager() function in controllers/memcached_controller.go specifies how the controller is built to watch the CR and the other resources that the controller owns and manages.

```go
import (
	...
	appsv1 "k8s.io/api/apps/v1"
	...
)

func (r *MemcachedReconciler) SetupWithManager(mgr ctrl.Manager) error {
	return ctrl.NewControllerManagedBy(mgr).
		For(&cachev1alpha1.Memcached{}).
		Owns(&appsv1.Deployment{}).
		Complete(r)
}
```

NewControllerManagedBy() provides a controller builder that allows various controller configurations.

For(&cachev1alpha1.Memcached{}) specifies the Memcached type as the primary resource to watch. For each Add/Update/Delete event on a Memcached object, the reconcile loop is sent a reconcile Request (a namespace/name key) for that Memcached object.

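Owns(&appsv1.Deployment{}) specifies the Deployment type as a secondary resource to watch. For each Deployment Add/Update/Delete event, the event handler maps the event to a reconcile Request for the Deployment's owner, which in this case is the Memcached object for which the Deployment was created.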
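The owner mapping performed for Owns() can be sketched as follows. This is a simplified, hypothetical model (ownerRef, deployment, request, groupOf, and mapToOwner are illustrative names), not controller-runtime's handler code: an event on a Deployment becomes a reconcile request for the ownerReference marked as controller whose API group and kind match Memcached, and Deployments without such an owner are ignored.

```go
package main

import (
	"fmt"
	"strings"
)

// ownerRef keeps the ownerReference fields this sketch needs (toy type).
type ownerRef struct {
	APIVersion string
	Kind       string
	Name       string
	Controller bool
}

// deployment is a toy secondary object carrying owner references.
type deployment struct {
	Namespace string
	Name      string
	Owners    []ownerRef
}

// request is the namespace/name key handed to Reconcile.
type request struct{ Namespace, Name string }

// groupOf extracts the API group from an apiVersion like "cache.example.com/v1alpha1".
// The core group ("v1") has an empty group name.
func groupOf(apiVersion string) string {
	if i := strings.LastIndex(apiVersion, "/"); i >= 0 {
		return apiVersion[:i]
	}
	return ""
}

// mapToOwner turns an event on d into a request for its controlling owner of the
// given group and kind. The owner here is namespaced, so it shares d's namespace.
func mapToOwner(d deployment, group, kind string) (request, bool) {
	for _, ref := range d.Owners {
		if ref.Controller && ref.Kind == kind && groupOf(ref.APIVersion) == group {
			return request{Namespace: d.Namespace, Name: ref.Name}, true
		}
	}
	return request{}, false
}

func main() {
	owned := deployment{
		Namespace: "liucc-test",
		Name:      "memcached-sample",
		Owners: []ownerRef{{
			APIVersion: "cache.example.com/v1alpha1",
			Kind:       "Memcached",
			Name:       "memcached-sample",
			Controller: true,
		}},
	}
	unowned := deployment{Namespace: "liucc-test", Name: "hello-spring-svc-deployment"}

	for _, d := range []deployment{owned, unowned} {
		req, ok := mapToOwner(d, "cache.example.com", "Memcached")
		fmt.Printf("%s -> %+v (enqueued=%v)\n", d.Name, req, ok)
	}
}
```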

Controller configuration

Many other useful settings can be applied when initializing a controller. For more details, see the upstream builder and controller documentation.

- Set the maximum number of concurrent Reconciles for the controller with the MaxConcurrentReconciles option. The default is 1.

```go
import (
	...
	"sigs.k8s.io/controller-runtime/pkg/controller"
	...
)

func (r *MemcachedReconciler) SetupWithManager(mgr ctrl.Manager) error {
	return ctrl.NewControllerManagedBy(mgr).
		For(&cachev1alpha1.Memcached{}).
		Owns(&appsv1.Deployment{}).
		WithOptions(controller.Options{MaxConcurrentReconciles: 2}).
		Complete(r)
}
```

- Filter watch events using predicates (https://sdk.operatorframework.io/docs/building-operators/golang/references/event-filtering/).

- Choose the type of EventHandler (https://pkg.go.dev/sigs.k8s.io/controller-runtime/pkg/handler#hdr-EventHandlers) to change how watch events are translated into reconcile requests for the reconcile loop. For operator relationships more complex than primary and secondary resources, the EnqueueRequestsFromMapFunc (https://pkg.go.dev/sigs.k8s.io/controller-runtime/pkg/handler#EnqueueRequestsFromMapFunc) handler can turn a watch event into an arbitrary set of reconcile requests.
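As an example of the predicate idea, the sketch below drops update events in which metadata.generation did not change. It models the behavior behind controller-runtime's predicate.GenerationChangedPredicate but is not that implementation; meta, updateEvent, and filter are hypothetical. It relies on the API server incrementing generation for spec changes but not for status-only writes when the status subresource is enabled.

```go
package main

import "fmt"

// meta keeps the two metadata fields this sketch needs (toy type).
type meta struct {
	Name       string
	Generation int64
}

// updateEvent carries the old and new versions of an updated object.
type updateEvent struct{ Old, New meta }

// generationChanged passes an update only if metadata.generation changed,
// which drops events caused by status-only writes (such as status.nodes).
func generationChanged(e updateEvent) bool {
	return e.Old.Generation != e.New.Generation
}

// filter keeps only the events accepted by pred.
func filter(events []updateEvent, pred func(updateEvent) bool) []updateEvent {
	var kept []updateEvent
	for _, e := range events {
		if pred(e) {
			kept = append(kept, e)
		}
	}
	return kept
}

func main() {
	events := []updateEvent{
		{meta{"memcached-sample", 1}, meta{"memcached-sample", 2}}, // spec.size edited
		{meta{"memcached-sample", 2}, meta{"memcached-sample", 2}}, // status.nodes written
	}
	for _, e := range filter(events, generationChanged) {
		fmt.Printf("reconcile %s (generation %d -> %d)\n", e.New.Name, e.Old.Generation, e.New.Generation)
	}
}
```

Apply such a filter with care: Deployment status changes (for example, replicas becoming available) also leave the generation unchanged, so applying it to the Owns(&appsv1.Deployment{}) watch as well would hide events this controller may rely on.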

Reconcile loop

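The reconcile function is responsible for enforcing the desired CR state on the actual state of the system. It runs each time an event occurs on a watched CR or resource, and returns values depending on whether those states match.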

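In this way, every Controller has a Reconciler object with a Reconcile() method that implements the reconcile loop. The reconcile loop is passed the Request (https://pkg.go.dev/sigs.k8s.io/controller-runtime/pkg/reconcile#Request) argument, a namespace/name key used to look up the primary resource object, Memcached, from the cache: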

```go
import (
	ctrl "sigs.k8s.io/controller-runtime"

	cachev1alpha1 "github.com/example/memcached-operator/api/v1alpha1"
	...
)

func (r *MemcachedReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) {
	// Lookup the Memcached instance for this reconcile request
	memcached := &cachev1alpha1.Memcached{}
	err := r.Get(ctx, req.NamespacedName, memcached)
	...
}
```

For guidance on Reconcilers, clients, and interacting with resource events, see the Client API documentation (https://sdk.operatorframework.io/docs/building-operators/golang/references/client/). Below are some possible return options for a Reconciler:

- On error: return ctrl.Result{}, err

- Without an error, to requeue: return ctrl.Result{Requeue: true}, nil

- Otherwise, to stop the Reconcile: return ctrl.Result{}, nil

- To Reconcile again after some time X: return ctrl.Result{RequeueAfter: nextRun.Sub(r.Now())}, nil

For more details, see the Reconcile godoc (https://pkg.go.dev/sigs.k8s.io/controller-runtime/pkg/reconcile).

Specifying permissions and generating RBAC manifests

The controller needs certain RBAC (https://kubernetes.io/docs/reference/access-authn-authz/rbac/) permissions to interact with the resources it manages. These are specified with RBAC markers (https://book.kubebuilder.io/reference/markers/rbac.html), as shown below:

```go
//+kubebuilder:rbac:groups=cache.example.com,resources=memcacheds,verbs=get;list;watch;create;update;patch;delete
//+kubebuilder:rbac:groups=cache.example.com,resources=memcacheds/status,verbs=get;update;patch
//+kubebuilder:rbac:groups=cache.example.com,resources=memcacheds/finalizers,verbs=update
//+kubebuilder:rbac:groups=apps,resources=deployments,verbs=get;list;watch;create;update;patch;delete
//+kubebuilder:rbac:groups=core,resources=pods,verbs=get;list;watch

func (r *MemcachedReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) {
	...
}
```

The ClusterRole manifest at config/rbac/role.yaml is generated from the above markers via controller-gen with the following command:

```shell
[root@blog memcached-operator]# go mod tidy   # without this step, the next command fails
[root@blog memcached-operator]# make manifests
/root/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
```

Configuring the operator image

Everything is now in place. All that remains is to build the operator image and push it to the chosen image registry.

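Before building the operator image, make sure the generated Dockerfile references the base image we want. We can change the default "runner" image gcr.io/distroless/static:nonroot by replacing its tag with another one (for example alpine:latest) and removing the USER 65532:65532 instruction. Rather than deleting these instructions, we commented them out. After the changes, the Dockerfile looks like this: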

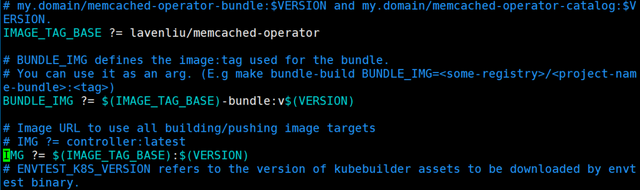

```dockerfile
# Build the manager binary
FROM golang:1.16 as builder

WORKDIR /workspace
# Copy the Go Modules manifests
COPY go.mod go.mod
COPY go.sum go.sum
# cache deps before building and copying source so that we don't need to re-download as much
# and so that source changes don't invalidate our downloaded layer
# This line was modified
RUN export GOPROXY=https://goproxy.cn && go mod download

# Copy the go source
COPY main.go main.go
COPY api/ api/
COPY controllers/ controllers/

# Build
RUN CGO_ENABLED=0 GOOS=linux GOARCH=amd64 go build -a -o manager main.go

# Use distroless as minimal base image to package the manager binary
# Refer to https://github.com/GoogleContainerTools/distroless for more details
# The next line was commented out and FROM alpine:latest was added
# FROM gcr.io/distroless/static:nonroot
FROM alpine:latest
WORKDIR /
COPY --from=builder /workspace/manager .
# This line was commented out
# USER 65532:65532

ENTRYPOINT ["/manager"]
```

The Makefile composes image tags from values written at project initialization or passed on the command line. In particular, the IMAGE_TAG_BASE variable lets us define a common image registry, namespace, and partial name for all image tags. If the current value is incorrect, update it to point to another registry or namespace. We can then update the IMG variable definition as follows:

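```makefile
# Around line 32 of the Makefile, make the following change (adjust to your setup).
# Here I use my own Docker Hub namespace, lavenliu;
# replace it with your own as needed.
IMAGE_TAG_BASE ?= lavenliu/memcached-operator

# Around line 40 of the Makefile, comment out the original line
# IMG ?= controller:latest
# and replace it with:
IMG ?= $(IMAGE_TAG_BASE):$(VERSION)
```

With these settings, we no longer need to pass IMG on the command line. The command below builds an image named lavenliu/memcached-operator with the tag 0.0.1 and pushes it to the specified registry.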

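Note: before running the command below, we need to modify the Dockerfile. Because the Go code is built inside the container, a Go module proxy has to be set there as well; otherwise some dependencies may fail to download. The change is: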

Change RUN go mod download to RUN export GOPROXY=https://goproxy.cn && go mod download

Before running the command below, make sure we are logged in to Docker Hub:

```
[root@blog memcached-operator]# docker login
Login with your Docker ID to push and pull images from Docker Hub. If you don't have a Docker ID, head over to https://hub.docker.com to create one.
Username: lavenliu
Password:
WARNING! Your password will be stored unencrypted in /root/.docker/config.json.
Configure a credential helper to remove this warning. See
https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded
```

Once logged in, run the following command:

```
[root@blog memcached-operator]# make docker-build docker-push
/home/lcc/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
/home/lcc/memcached-operator/bin/controller-gen object:headerFile="hack/boilerplate.go.txt" paths="./..."
go fmt ./...
go vet ./...
KUBEBUILDER_ASSETS="/root/.local/share/kubebuilder-envtest/k8s/1.22.1-linux-amd64" go test ./... -coverprofile cover.out
?       github.com/example/memcached-operator                 [no test files]
?       github.com/example/memcached-operator/api/v1alpha1    [no test files]
ok      github.com/example/memcached-operator/controllers     8.846s  coverage: 0.0% of statements
docker build -t example.com/memcached-operator:0.0.1 .
Sending build context to Docker daemon  174.1kB
Step 1/13 : FROM golang:1.16 as builder
1.16: Pulling from library/golang
......
Status: Downloaded newer image for golang:1.16
 ---> 8ffb179c0658
Step 2/13 : WORKDIR /workspace
 ---> Running in f8bfa670f96f
Removing intermediate container f8bfa670f96f
 ---> 98c265863c39
Step 3/13 : COPY go.mod go.mod
 ---> ef86cd6e1e92
Step 4/13 : COPY go.sum go.sum
 ---> a43ba540fe13
Step 5/13 : RUN export GOPROXY=https://goproxy.cn && go mod download
......
Successfully built 5e9931cceaf9
Successfully tagged lavenliu/memcached-operator:0.0.1
docker push lavenliu/memcached-operator:0.0.1
The push refers to repository [docker.io/lavenliu/memcached-operator]
......
```

Check that the image exists locally and in the remote registry:

Check that the image exists on Docker Hub:

If everything succeeds, our custom Operator image is pushed to the location we specified.

Running the Operator

We will run the Operator in the following two ways:

- As Go code outside the cluster

- As a Deployment inside the Kubernetes cluster

1. Running locally (outside the cluster)

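The following steps show how to deploy the operator on the cluster. However, to run it locally, outside the cluster, for development purposes, use the Makefile target make install run. The two steps can also be run separately: first make install, then make run. Run make install first: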

```
[root@blog memcached-operator]# make install
/root/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
go: creating new go.mod: module tmp
Downloading sigs.k8s.io/kustomize/kustomize/v3@v3.8.7   # downloads the kustomize binary
go: downloading sigs.k8s.io/kustomize/kustomize/v3 v3.8.7
......
go: downloading golang.org/x/net v0.0.0-20200625001655-4c5254603344
......
go get: added sigs.k8s.io/kustomize/kustomize/v3 v3.8.7
/root/memcached-operator/bin/kustomize build config/crd | kubectl apply -f -
customresourcedefinition.apiextensions.k8s.io/memcacheds.cache.example.com created
```

Then run make run:

```
[root@blog memcached-operator]# make run
/root/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
/root/memcached-operator/bin/controller-gen object:headerFile="hack/boilerplate.go.txt" paths="./..."
go fmt ./...
go vet ./...
go run ./main.go
I0331 17:58:25.054157 4005942 request.go:665] Waited for 1.02972392s due to client-side throttling, not priority and fairness, request: GET:https://192.168.56.101:6443/apis/admissionregistration.k8s.io/v1?timeout=32s
2022-03-31T17:58:25.605+0800    INFO    controller-runtime.metrics    metrics server is starting to listen    {"addr": ":8080"}
2022-03-31T17:58:25.605+0800    INFO    setup    starting manager
2022-03-31T17:58:25.605+0800    INFO    starting metrics server    {"path": "/metrics"}
2022-03-31T17:58:25.605+0800    INFO    controller.memcached    Starting EventSource    {"reconciler group": "cache.example.com", "reconciler kind": "Memcached", "source": "kind source: /, Kind="}
2022-03-31T17:58:25.605+0800    INFO    controller.memcached    Starting EventSource    {"reconciler group": "cache.example.com", "reconciler kind": "Memcached", "source": "kind source: /, Kind="}
2022-03-31T17:58:25.605+0800    INFO    controller.memcached    Starting Controller    {"reconciler group": "cache.example.com", "reconciler kind": "Memcached"}
2022-03-31T17:58:25.707+0800    INFO    controller.memcached    Starting workers    {"reconciler group": "cache.example.com", "reconciler kind": "Memcached", "worker count": 1}
```

Check the CRD:

[root@blog memcached-operator]# kubectl get crd |grep mem
memcacheds.cache.example.com                                   2022-03-31T09:57:16Z

Create a Memcached test CR
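The CR created in this step is plain data that the controller decodes into Go structs. The sketch below uses simplified, hypothetical stand-ins for the generated types in api/v1alpha1 (assumption: as in the operator-sdk Memcached tutorial, the spec carries a Size int32 and the status a Nodes []string, matching spec.size and status.nodes in the YAML output further down). It decodes a JSON rendering of the sample with the standard library, since decoding YAML would need a third-party package.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// MemcachedSpec and MemcachedStatus are simplified, hypothetical stand-ins for
// the generated types in api/v1alpha1 (no TypeMeta/ObjectMeta, no scheme).
type MemcachedSpec struct {
	Size int32 `json:"size,omitempty"` // spec.size: desired number of memcached pods
}

type MemcachedStatus struct {
	Nodes []string `json:"nodes"` // status.nodes: names of the memcached pods
}

type Memcached struct {
	APIVersion string `json:"apiVersion"`
	Kind       string `json:"kind"`
	Metadata   struct {
		Name string `json:"name"`
	} `json:"metadata"`
	Spec   MemcachedSpec   `json:"spec"`
	Status MemcachedStatus `json:"status"`
}

// sampleCR is a JSON rendering of the edited config/samples/cache_v1alpha1_memcached.yaml.
const sampleCR = `{
  "apiVersion": "cache.example.com/v1alpha1",
  "kind": "Memcached",
  "metadata": {"name": "memcached-sample"},
  "spec": {"size": 3}
}`

// decodeMemcached parses a Memcached object from JSON.
func decodeMemcached(data []byte) (Memcached, error) {
	var m Memcached
	err := json.Unmarshal(data, &m)
	return m, err
}

func main() {
	m, err := decodeMemcached([]byte(sampleCR))
	if err != nil {
		panic(err)
	}
	fmt.Printf("%s %s/%s wants %d replicas\n", m.APIVersion, m.Kind, m.Metadata.Name, m.Spec.Size)
}
```

Note that status is empty right after creation; it is the controller, not the user, that fills in status.nodes.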

Update the Memcached CR manifest config/samples/cache_v1alpha1_memcached.yaml with the following configuration:

# The original scaffolded sample file
[root@blog memcached-operator]# cat config/samples/cache_v1alpha1_memcached.yaml
apiVersion: cache.my.domain/v1alpha1
kind: Memcached
metadata:
  name: memcached-sample
spec:
  # TODO(user): Add fields here

# The file after editing
[root@blog memcached-operator]# cat config/samples/cache_v1alpha1_memcached.yaml
apiVersion: cache.example.com/v1alpha1
kind: Memcached
metadata:
  name: memcached-sample
spec:
  size: 3

Create the CR above:

# State before the CR is created
[root@blog memcached-operator]# kubectl -n liucc-test get deployment
NAME                          READY   UP-TO-DATE   AVAILABLE   AGE
hello-spring-svc-deployment   1/1     1            1           79d
json-spring-svc-deployment    1/1     1            1           72d
memcached-sample              3/3     3            3           25s
world-spring-svc-deployment   1/1     1            1           78d
[root@blog memcached-operator]# kubectl -n liucc-test apply -f config/samples/cache_v1alpha1_memcached.yaml
memcached.cache.example.com/memcached-sample created

Confirm that the memcached operator has created the corresponding Deployment with the expected number of pods by running the check commands again:

[root@blog memcached-operator]# kubectl -n liucc-test get pods
NAME                                           READY   STATUS    RESTARTS   AGE
hello-spring-svc-deployment-694b8cb9d4-4sjl5   1/1     Running   0          78d
json-spring-svc-deployment-cf88f85c8-rsqdc     1/1     Running   0          71d
memcached-sample-6c765df685-dtmqg              1/1     Running   0          51s
memcached-sample-6c765df685-nl8ks              1/1     Running   0          51s
memcached-sample-6c765df685-vpmfq              1/1     Running   0          51s
world-spring-svc-deployment-84b78bc8d4-tvdz8   1/1     Running   0          78d
[root@blog memcached-operator]# kubectl -n liucc-test get memcached/memcached-sample -o yaml
apiVersion: cache.example.com/v1alpha1
kind: Memcached
metadata:
  annotations:
    kubectl.kubernetes.io/last-applied-configuration: |
      {"apiVersion":"cache.example.com/v1alpha1","kind":"Memcached","metadata":{"annotations":{},"name":"memcached-sample","namespace":"liucc-test"},"spec":{"size":3}}
  creationTimestamp: "2022-03-31T10:26:00Z"
  generation: 1
  managedFields:
  - apiVersion: cache.example.com/v1alpha1
    fieldsType: FieldsV1
    fieldsV1:
      f:metadata:
        f:annotations:
          .: {}
          f:kubectl.kubernetes.io/last-applied-configuration: {}
      f:spec:
        .: {}
        f:size: {}
    manager: kubectl-client-side-apply
    operation: Update
    time: "2022-03-31T10:26:00Z"
  - apiVersion: cache.example.com/v1alpha1
    fieldsType: FieldsV1
    fieldsV1:
      f:status:
        .: {}
        f:nodes: {}
    manager: main
    operation: Update
    time: "2022-03-31T10:26:01Z"
  name: memcached-sample
  namespace: liucc-test
  resourceVersion: "59357587"
  uid: ef2efd68-c31b-4a9e-8795-6d61975e48dd
spec:
  size: 3
status:
  nodes:
  - memcached-sample-6c765df685-vpmfq
  - memcached-sample-6c765df685-dtmqg
  - memcached-sample-6c765df685-nl8ks

Update the pod count
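Changing the pod count works because the controller reconciles on every change to the CR. In the operator-sdk Memcached tutorial, the reconcile loop (among other steps) compares spec.size with the Deployment's replica count and updates the Deployment when they differ, then writes the current pod names into status.nodes, the list visible in the YAML above. The sketch below models only those two comparisons with simplified, hypothetical types and no API calls; it is not the controller code used in this article.

```go
package main

import (
	"fmt"
	"reflect"
)

// Hypothetical, stripped-down stand-ins for appsv1.Deployment, corev1.Pod and
// the generated Memcached type.
type Deployment struct {
	Replicas *int32 // a pointer, as in the real DeploymentSpec
}

type Pod struct {
	Name string
}

type Memcached struct {
	Size  int32    // spec.size
	Nodes []string // status.nodes
}

// reconcileReplicas makes the Deployment's replica count match spec.size and
// reports whether the Deployment changed (a real controller would then send
// an Update to the API server and requeue).
func reconcileReplicas(m *Memcached, dep *Deployment) bool {
	if dep.Replicas != nil && *dep.Replicas == m.Size {
		return false // already converged
	}
	size := m.Size
	dep.Replicas = &size
	return true
}

// reconcileNodes copies pod names into status.nodes and reports whether the
// status changed, so a no-op status write can be skipped. reflect.DeepEqual
// is order-sensitive, so a reordered pod list also counts as a change.
func reconcileNodes(m *Memcached, pods []Pod) bool {
	names := make([]string, 0, len(pods))
	for _, p := range pods {
		names = append(names, p.Name)
	}
	if reflect.DeepEqual(names, m.Nodes) {
		return false
	}
	m.Nodes = names
	return true
}

func main() {
	three := int32(3)
	dep := &Deployment{Replicas: &three}
	m := &Memcached{Size: 5} // spec.size patched from 3 to 5

	changed := reconcileReplicas(m, dep)
	fmt.Println("deployment changed:", changed, "replicas:", *dep.Replicas)
	changed = reconcileReplicas(m, dep)
	fmt.Println("deployment changed:", changed, "replicas:", *dep.Replicas)

	pods := []Pod{{Name: "memcached-sample-6c765df685-65wc8"}, {Name: "memcached-sample-6c765df685-n6wsk"}}
	changed = reconcileNodes(m, pods)
	fmt.Println("status changed:", changed, "nodes:", m.Nodes)
}
```

The second call to each function is a no-op: reconciliation is level-based, so running it repeatedly against an already converged state changes nothing.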

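Next, change spec.size from 3 to 5 and verify the result. Rather than editing spec.size in config/samples/cache_v1alpha1_memcached.yaml, the command below patches the live CR directly: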
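Before running the command below, note what --type=merge does: kubectl sends a JSON merge patch (RFC 7386), in which nested objects are merged key by key, a null value deletes a key, and any other value (arrays included) replaces the old one. That is why {"spec":{"size": 5}} changes only spec.size and leaves the rest of the CR untouched. The flag is passed explicitly because kubectl defaults to a strategic merge patch, which custom resources do not support. A minimal, standard-library-only sketch of the merge rules:

```go
package main

import (
	"encoding/json"
	"fmt"
)

// mergePatch applies an RFC 7386 JSON merge patch to target and returns the
// result. Nested objects merge key by key, null deletes a key, and any other
// value (including arrays) replaces the target value wholesale.
func mergePatch(target, patch interface{}) interface{} {
	patchObj, ok := patch.(map[string]interface{})
	if !ok {
		return patch // a non-object patch replaces the target entirely
	}
	targetObj, ok := target.(map[string]interface{})
	if !ok {
		targetObj = map[string]interface{}{} // a non-object target is discarded
	}
	for key, value := range patchObj {
		if value == nil {
			delete(targetObj, key)
			continue
		}
		targetObj[key] = mergePatch(targetObj[key], value)
	}
	return targetObj
}

// applyMergePatch decodes both JSON documents, merges them and re-encodes the result.
func applyMergePatch(doc, patch string) (string, error) {
	var target, p interface{}
	if err := json.Unmarshal([]byte(doc), &target); err != nil {
		return "", err
	}
	if err := json.Unmarshal([]byte(patch), &p); err != nil {
		return "", err
	}
	out, err := json.Marshal(mergePatch(target, p))
	return string(out), err
}

func main() {
	cr := `{"metadata":{"name":"memcached-sample"},"spec":{"size":3}}`
	out, err := applyMergePatch(cr, `{"spec":{"size": 5}}`)
	if err != nil {
		panic(err)
	}
	fmt.Println(out)
}
```

The API server applies the real patch server-side; this sketch only illustrates the semantics.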

[root@blog memcached-operator]# kubectl -n liucc-test patch memcached memcached-sample -p '{"spec":{"size": 5}}' --type=merge
memcached.cache.example.com/memcached-sample patched
[root@blog memcached-operator]# kubectl -n liucc-test get po
NAME                                           READY   STATUS    RESTARTS   AGE
hello-spring-svc-deployment-694b8cb9d4-4sjl5   1/1     Running   0          78d
json-spring-svc-deployment-cf88f85c8-rsqdc     1/1     Running   0          71d
memcached-sample-6c765df685-65wc8              1/1     Running   0          90s
memcached-sample-6c765df685-7r45t              1/1     Running   0          90s
memcached-sample-6c765df685-llhz2              1/1     Running   0          90s
memcached-sample-6c765df685-n6wsk              1/1     Running   0          5s
memcached-sample-6c765df685-nwkjv              1/1     Running   0          5s
world-spring-svc-deployment-84b78bc8d4-tvdz8   1/1     Running   0          78d

Check the Deployment again:

[root@blog memcached-operator]# kubectl -n liucc-test get deployment
NAME                          READY   UP-TO-DATE   AVAILABLE   AGE
hello-spring-svc-deployment   1/1     1            1           79d
json-spring-svc-deployment    1/1     1            1           72d
memcached-sample              5/5     5            5           2m27s
world-spring-svc-deployment   1/1     1            1           78d

Clean up
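Deleting the Memcached CR is enough to remove its Deployment and pods because of owner references. In the operator-sdk Memcached tutorial, the controller marks the CR as the Deployment's controller owner via ctrl.SetControllerReference; the Deployment in turn owns a ReplicaSet, which owns the pods, and the Kubernetes garbage collector deletes dependents once all their owners are gone (hence the Terminating pods in the cleanup output below). The toy model below, with hypothetical names and no API calls, only illustrates that cascade.

```go
package main

import (
	"fmt"
	"sort"
)

// Object is a toy model of a Kubernetes object: an ID plus the IDs of its
// owners (think metadata.ownerReferences). All names here are hypothetical.
type Object struct {
	ID     string
	Owners []string
}

// deleteCascade deletes id from store, then keeps deleting objects that have
// owners but none of them left, roughly like background cascading deletion.
// It returns the deleted IDs: the root first, the rest sorted.
func deleteCascade(store map[string]Object, id string) []string {
	delete(store, id)
	deleted := []string{id}
	for changed := true; changed; {
		changed = false
		for oid, obj := range store {
			if len(obj.Owners) == 0 {
				continue // ownerless objects are never garbage-collected
			}
			orphaned := true
			for _, owner := range obj.Owners {
				if _, ok := store[owner]; ok {
					orphaned = false
					break
				}
			}
			if orphaned {
				delete(store, oid)
				deleted = append(deleted, oid)
				changed = true
			}
		}
	}
	sort.Strings(deleted[1:])
	return deleted
}

func main() {
	store := map[string]Object{
		"memcached/memcached-sample":             {ID: "memcached/memcached-sample"},
		"deployment/memcached-sample":            {ID: "deployment/memcached-sample", Owners: []string{"memcached/memcached-sample"}},
		"replicaset/memcached-sample-6c765df685": {ID: "replicaset/memcached-sample-6c765df685", Owners: []string{"deployment/memcached-sample"}},
		"pod/memcached-sample-6c765df685-dtmqg":  {ID: "pod/memcached-sample-6c765df685-dtmqg", Owners: []string{"replicaset/memcached-sample-6c765df685"}},
		"deployment/hello-spring-svc-deployment": {ID: "deployment/hello-spring-svc-deployment"}, // unrelated, survives
	}
	fmt.Println(deleteCascade(store, "memcached/memcached-sample"))
	fmt.Println(len(store), "object(s) left")
}
```

Without the owner reference, deleting the CR would leave the Deployment running, and the controller would have to clean it up itself, for example with a finalizer.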

Run the following commands to clean up the deployed resources:

[root@blog memcached-operator]# kubectl -n liucc-test delete -f config/samples/cache_v1alpha1_memcached.yaml
memcached.cache.example.com "memcached-sample" deleted
# Verify that the Deployment was deleted
[root@blog memcached-operator]# kubectl -n liucc-test get deployment
NAME                          READY   UP-TO-DATE   AVAILABLE   AGE
hello-spring-svc-deployment   1/1     1            1           79d
json-spring-svc-deployment    1/1     1            1           72d
world-spring-svc-deployment   1/1     1            1           78d
# Verify that the pods were deleted
[root@blog memcached-operator]# kubectl -n liucc-test get po
NAME                                           READY   STATUS        RESTARTS   AGE
hello-spring-svc-deployment-694b8cb9d4-4sjl5   1/1     Running       0          78d
json-spring-svc-deployment-cf88f85c8-rsqdc     1/1     Running       0          71d
memcached-sample-6c765df685-dtmqg              0/1     Terminating   0          3m5s
memcached-sample-6c765df685-vmp6q              0/1     Terminating   0          60s
world-spring-svc-deployment-84b78bc8d4-tvdz8   1/1     Running       0          78d

2. Run as a Deployment inside the cluster

By default, a new namespace named <project-name>-system, for example memcached-operator-system, is created on the Kubernetes cluster. Deploying the operator with make deploy also installs RBAC from the manifests in config/rbac.
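The image that make deploy uses comes from Makefile variables. Judging from the logs in this article (controller=lavenliu/memcached-operator:0.0.1 during make deploy, lavenliu/memcached-operator-bundle:v0.0.1 during make bundle-build), the operator image resolves to $(IMAGE_TAG_BASE):$(VERSION) and the bundle image to $(IMAGE_TAG_BASE)-bundle:v$(VERSION), which is why a single IMAGE_TAG_BASE edit changes both. The sketch below reproduces that composition as an assumption; check your own Makefile, because the default for IMG differs between operator-sdk versions.

```go
package main

import "fmt"

// imageRefs composes image references the way the make logs in this article
// suggest (an assumption, not read from the Makefile):
//   IMG        = $(IMAGE_TAG_BASE):$(VERSION)
//   BUNDLE_IMG = $(IMAGE_TAG_BASE)-bundle:v$(VERSION)
func imageRefs(tagBase, version string) (img, bundleImg string) {
	img = tagBase + ":" + version
	bundleImg = tagBase + "-bundle:v" + version
	return img, bundleImg
}

func main() {
	img, bundleImg := imageRefs("lavenliu/memcached-operator", "0.0.1")
	fmt.Println(img)
	fmt.Println(bundleImg)
}
```

Note the asymmetry: the bundle tag carries a v prefix, the operator image tag does not.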

# Show the make help
[root@blog memcached-operator]# make help

Usage:
  make <target>

General
  help             Display this help.

Development
  manifests        Generate WebhookConfiguration, ClusterRole and CustomResourceDefinition objects.
  generate         Generate code containing DeepCopy, DeepCopyInto, and DeepCopyObject method implementations.
  fmt              Run go fmt against code.
  vet              Run go vet against code.
  test             Run tests.

Build
  build            Build manager binary.
  run              Run a controller from your host.
  docker-build     Build docker image with the manager.
  docker-push      Push docker image with the manager.

Deployment
  install          Install CRDs into the K8s cluster specified in ~/.kube/config.
  uninstall        Uninstall CRDs from the K8s cluster specified in ~/.kube/config. Call with ignore-not-found=true to ignore resource not found errors during deletion.
  deploy           Deploy controller to the K8s cluster specified in ~/.kube/config.
  undeploy         Undeploy controller from the K8s cluster specified in ~/.kube/config. Call with ignore-not-found=true to ignore resource not found errors during deletion.
  controller-gen   Download controller-gen locally if necessary.
  kustomize        Download kustomize locally if necessary.
  envtest          Download envtest-setup locally if necessary.
  bundle           Generate bundle manifests and metadata, then validate generated files.
  bundle-build     Build the bundle image.
  bundle-push      Push the bundle image.
  opm              Download opm locally if necessary.
  catalog-build    Build a catalog image.
  catalog-push     Push a catalog image.

Before deploying, edit the Makefile and change the image address:

# Around line 33
IMAGE_TAG_BASE ?= <change to a valid registry address>/memcached-operator

Then deploy:

[root@blog memcached-operator]# make deploy
/root/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
cd config/manager && /root/memcached-operator/bin/kustomize edit set image controller=lavenliu/memcached-operator:0.0.1
/root/memcached-operator/bin/kustomize build config/default | kubectl apply -f -
namespace/memcached-operator-system created
customresourcedefinition.apiextensions.k8s.io/memcacheds.cache.example.com configured
serviceaccount/memcached-operator-controller-manager created
role.rbac.authorization.k8s.io/memcached-operator-leader-election-role created
clusterrole.rbac.authorization.k8s.io/memcached-operator-manager-role created
clusterrole.rbac.authorization.k8s.io/memcached-operator-metrics-reader created
clusterrole.rbac.authorization.k8s.io/memcached-operator-proxy-role created
rolebinding.rbac.authorization.k8s.io/memcached-operator-leader-election-rolebinding created
clusterrolebinding.rbac.authorization.k8s.io/memcached-operator-manager-rolebinding created
clusterrolebinding.rbac.authorization.k8s.io/memcached-operator-proxy-rolebinding created
configmap/memcached-operator-manager-config created
service/memcached-operator-controller-manager-metrics-service created
deployment.apps/memcached-operator-controller-manager created
[root@blog memcached-operator]# echo $?
0

Check whether memcached-operator started successfully:

[root@blog memcached-operator]# kubectl -n memcached-operator-system get deployment
NAME                                    READY   UP-TO-DATE   AVAILABLE   AGE
memcached-operator-controller-manager   0/1     1            0           50s
[root@blog memcached-operator]# kubectl -n memcached-operator-system get po
NAME                                                     READY   STATUS              RESTARTS   AGE
memcached-operator-controller-manager-7bbc46698f-wsqvp   0/2     ContainerCreating   0          50s

It failed, mainly because an image pull failed and the container also failed to run. Let's look at the cause:

[root@blog memcached-operator]# kubectl -n memcached-operator-system describe po memcached-operator-controller-manager-7bbc46698f-wsqvp
......
Events:
  Type     Reason     Age                            From               Message
  ----     ------     ----                           ----               -------
  Normal   Scheduled  59s                            default-scheduler  Successfully assigned memcached-operator-system/memcached-operator-controller-manager-7bbc46698f-wsqvp to node03.lavenliu.cn
  Normal   Pulling    <invalid>                      kubelet            Pulling image "gcr.io/kubebuilder/kube-rbac-proxy:v0.8.0"
  Normal   Pulled     <invalid>                      kubelet            Successfully pulled image "gcr.io/kubebuilder/kube-rbac-proxy:v0.8.0" in 15.593866868s
  Normal   Created    <invalid>                      kubelet            Created container kube-rbac-proxy
  Normal   Started    <invalid>                      kubelet            Started container kube-rbac-proxy
  Normal   Pulling    <invalid>                      kubelet            Pulling image "lavenliu/memcached-operator:0.0.1"
  Normal   Pulled     <invalid>                      kubelet            Successfully pulled image "lavenliu/memcached-operator:0.0.1" in 19.848521963s
  Warning  Failed     <invalid> (x4 over <invalid>)  kubelet            Error: container has runAsNonRoot and image will run as root (pod: "memcached-operator-controller-manager-7bbc46698f-wsqvp_memcached-operator-system(20bd3c70-7400-4497-904e-325122b364db)", container: manager)
  Normal   Pulled     <invalid> (x3 over <invalid>)  kubelet            Container image "lavenliu/memcached-operator:0.0.1" already present on machine

The key error is "container has runAsNonRoot and image will run as root": the pod's security context requires a non-root user, but the manager image runs as root. Edit config/default/manager_auth_proxy_patch.yaml as follows, setting an explicit non-root runAsUser and switching the kube-rbac-proxy image:

[root@blog memcached-operator]# vim config/default/manager_auth_proxy_patch.yaml
spec:
  template:
    spec:
      securityContext:        # added
        runAsUser: 1000       # added
      containers:
      - name: kube-rbac-proxy
        # image: gcr.io/kubebuilder/kube-rbac-proxy:v0.8.0   # commented out because the image could not be pulled
        # The following image, found on Docker Hub, works:
        image: rancher/kube-rbac-proxy:v0.5.0               # added

After making the change, run make undeploy:
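Before undeploying, it is worth seeing why runAsUser: 1000 clears the error. The kubelet refuses to start a container whose effective security context has runAsNonRoot: true (presumably set in the scaffolded config/manager/manager.yaml) unless it can tell the process will not run as UID 0: an explicit runAsUser is checked directly, otherwise the UID from the image's USER is used. The manager image here runs as root, so a non-root runAsUser at pod level satisfies the check. The sketch below approximates that decision with hypothetical, simplified types; the messages mirror the kubelet errors, but this is not kubelet code.

```go
package main

import (
	"errors"
	"fmt"
)

// SecurityContext is a hypothetical, reduced view of the securityContext
// fields involved in the non-root check.
type SecurityContext struct {
	RunAsNonRoot *bool
	RunAsUser    *int64
}

// effective merges pod-level and container-level settings; a value set on the
// container takes precedence over the pod-level one.
func effective(pod, container SecurityContext) SecurityContext {
	out := pod
	if container.RunAsNonRoot != nil {
		out.RunAsNonRoot = container.RunAsNonRoot
	}
	if container.RunAsUser != nil {
		out.RunAsUser = container.RunAsUser
	}
	return out
}

// verifyNonRoot approximates the kubelet's check. imageUID is the numeric
// USER of the image (0 when the image runs as root).
func verifyNonRoot(sc SecurityContext, imageUID int64) error {
	if sc.RunAsNonRoot == nil || !*sc.RunAsNonRoot {
		return nil // no non-root requirement
	}
	if sc.RunAsUser != nil {
		if *sc.RunAsUser == 0 {
			return errors.New("container's runAsUser breaks non-root policy")
		}
		return nil // an explicit non-root UID overrides the image's USER
	}
	if imageUID == 0 {
		return errors.New("container has runAsNonRoot and image will run as root")
	}
	return nil
}

func main() {
	nonRoot := true
	uid := int64(1000)
	pod := SecurityContext{RunAsNonRoot: &nonRoot}

	// Before the fix: runAsNonRoot is set, no runAsUser, and the image runs as root.
	fmt.Println(verifyNonRoot(effective(pod, SecurityContext{}), 0))

	// After the fix: runAsUser: 1000 at pod level.
	pod.RunAsUser = &uid
	fmt.Println(verifyNonRoot(effective(pod, SecurityContext{}), 0))
}
```

An alternative fix is to build the manager image with a non-root USER, so that no runAsUser override is needed.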

[root@blog memcached-operator]# make undeploy

Then run make deploy again:

[root@blog memcached-operator]# make deploy

Finally, check the Deployment and pod:

[root@blog memcached-operator]# kubectl -n memcached-operator-system get deployment
NAME                                    READY   UP-TO-DATE   AVAILABLE   AGE
memcached-operator-controller-manager   1/1     1            1           4m
[root@blog memcached-operator]# kubectl -n memcached-operator-system get po
NAME                                                     READY   STATUS    RESTARTS   AGE
memcached-operator-controller-manager-54548dbf4d-drnhp   2/2     Running   0          4m15s
[root@blog memcached-operator]# kubectl -n memcached-operator-system describe po memcached-operator-controller-manager-54548dbf4d-drnhp
......
Events:
  Type    Reason          Age    From               Message
  ----    ------          ----   ----               -------
  Normal  Scheduled       4m26s  default-scheduler  Successfully assigned memcached-operator-system/memcached-operator-controller-manager-54548dbf4d-drnhp to cn-shanghai.10.10.11.13
  Normal  AllocIPSucceed  4m26s  terway-daemon      Alloc IP 10.10.108.242/32 for Pod
  Normal  Pulled          4m26s  kubelet            Container image "rancher/kube-rbac-proxy:v0.5.0" already present on machine
  Normal  Created         4m26s  kubelet            Created container kube-rbac-proxy
  Normal  Started         4m26s  kubelet            Started container kube-rbac-proxy
  Normal  Pulled          4m26s  kubelet            Container image "harbor.lavenliu.cn/library/memcached-operator:0.0.1" already present on machine
  Normal  Created         4m26s  kubelet            Created container manager
  Normal  Started         4m26s  kubelet            Started container manager

The controller is deployed. Next, deploy an instance:

[root@blog memcached-operator]# kubectl -n liucc-test apply -f config/samples/cache_v1alpha1_memcached.yaml

Check the pods:

[root@blog memcached-operator]# kubectl -n liucc-test get po
NAME                                           READY   STATUS    RESTARTS   AGE
hello-spring-svc-deployment-694b8cb9d4-4sjl5   1/1     Running   0          78d
json-spring-svc-deployment-cf88f85c8-rsqdc     1/1     Running   0          71d
memcached-sample-6c765df685-7xrn2              1/1     Running   0          6m15s
memcached-sample-6c765df685-tdjz8              1/1     Running   0          6m15s
memcached-sample-6c765df685-xvpqk              1/1     Running   0          6m15s
world-spring-svc-deployment-84b78bc8d4-tvdz8   1/1     Running   0          78d

Change the number of pods to 5:

[root@blog memcached-operator]# kubectl -n liucc-test patch memcached memcached-sample -p '{"spec":{"size": 5}}' --type=merge
memcached.cache.example.com/memcached-sample patched
# Check again
[root@blog memcached-operator]# kubectl -n liucc-test get po
NAME                                           READY   STATUS    RESTARTS   AGE
hello-spring-svc-deployment-694b8cb9d4-4sjl5   1/1     Running   0          78d
json-spring-svc-deployment-cf88f85c8-rsqdc     1/1     Running   0          71d
memcached-sample-6c765df685-7xrn2              1/1     Running   0          7m27s
memcached-sample-6c765df685-tdjz8              1/1     Running   0          7m27s
memcached-sample-6c765df685-xvpqk              1/1     Running   0          7m27s
memcached-sample-6c765df685-b79kc              1/1     Running   0          3s       # newly started pod
memcached-sample-6c765df685-qkxvc              1/1     Running   0          3s       # newly started pod
world-spring-svc-deployment-84b78bc8d4-tvdz8   1/1     Running   0          78d

Clean up the demo environment:

[root@blog memcached-operator]# make undeploy
/root/memcached-operator/bin/kustomize build config/default | kubectl delete --ignore-not-found=false -f -
namespace "memcached-operator-system" deleted
customresourcedefinition.apiextensions.k8s.io "memcacheds.cache.example.com" deleted
serviceaccount "memcached-operator-controller-manager" deleted
role.rbac.authorization.k8s.io "memcached-operator-leader-election-role" deleted
clusterrole.rbac.authorization.k8s.io "memcached-operator-manager-role" deleted
clusterrole.rbac.authorization.k8s.io "memcached-operator-metrics-reader" deleted
clusterrole.rbac.authorization.k8s.io "memcached-operator-proxy-role" deleted
rolebinding.rbac.authorization.k8s.io "memcached-operator-leader-election-rolebinding" deleted
clusterrolebinding.rbac.authorization.k8s.io "memcached-operator-manager-rolebinding" deleted
clusterrolebinding.rbac.authorization.k8s.io "memcached-operator-proxy-rolebinding" deleted
configmap "memcached-operator-manager-config" deleted
service "memcached-operator-controller-manager-metrics-service" deleted
deployment.apps "memcached-operator-controller-manager" deleted

make undeploy also deletes the CRD, and deleting a CRD removes every Memcached CR of that type, so the memcached pods should be gone as well. Check whether they are still there:

[root@blog memcached-operator]# kubectl -n liucc-test get po
NAME                                           READY   STATUS    RESTARTS   AGE
hello-spring-svc-deployment-694b8cb9d4-4sjl5   1/1     Running   0          78d
json-spring-svc-deployment-cf88f85c8-rsqdc     1/1     Running   0          71d
world-spring-svc-deployment-84b78bc8d4-tvdz8   1/1     Running   0          78d

3. Deploy the Operator with OLM

First, install OLM:

[root@blog memcached-operator]# operator-sdk olm install
I0403 20:06:37.667540 1957628 request.go:665] Waited for 1.028361355s due to client-side throttling, not priority and fairness, request: GET:https://192.168.56.101:6443/apis/admissionregistration.k8s.io/v1beta1?timeout=32s
INFO[0001] Fetching CRDs for version "latest"
INFO[0001] Fetching resources for resolved version "latest"
I0403 20:07:02.327494 1957628 request.go:665] Waited for 1.04307318s due to client-side throttling, not priority and fairness, request: GET:https://192.168.56.101:6443/apis/log.alibabacloud.com/v1alpha1?timeout=32s
I0403 20:07:13.324530 1957628 request.go:665] Waited for 1.045662694s due to client-side throttling, not priority and fairness, request: GET:https://192.168.56.101:6443/apis/install.istio.io/v1alpha1?timeout=32s
INFO[0038] Creating CRDs and resources
INFO[0038] Creating CustomResourceDefinition "catalogsources.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "clusterserviceversions.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "installplans.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "olmconfigs.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "operatorconditions.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "operatorgroups.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "operators.operators.coreos.com"
INFO[0038] Creating CustomResourceDefinition "subscriptions.operators.coreos.com"
INFO[0038] Creating Namespace "olm"
INFO[0038] Creating Namespace "operators"
INFO[0039] Creating ServiceAccount "olm/olm-operator-serviceaccount"
INFO[0039] Creating ClusterRole "system:controller:operator-lifecycle-manager"
INFO[0039] Creating ClusterRoleBinding "olm-operator-binding-olm"
INFO[0039] Creating OLMConfig "cluster"
INFO[0042] Creating Deployment "olm/olm-operator"
INFO[0042] Creating Deployment "olm/catalog-operator"
INFO[0042] Creating ClusterRole "aggregate-olm-edit"
INFO[0042] Creating ClusterRole "aggregate-olm-view"
INFO[0042] Creating OperatorGroup "operators/global-operators"
INFO[0042] Creating OperatorGroup "olm/olm-operators"
INFO[0042] Creating ClusterServiceVersion "olm/packageserver"
INFO[0042] Creating CatalogSource "olm/operatorhubio-catalog"
INFO[0042] Waiting for deployment/olm-operator rollout to complete
INFO[0042] Waiting for Deployment "olm/olm-operator" to rollout: 0 of 1 updated replicas are available
INFO[0063] Deployment "olm/olm-operator" successfully rolled out
INFO[0063] Waiting for deployment/catalog-operator rollout to complete
INFO[0063] Deployment "olm/catalog-operator" successfully rolled out
INFO[0063] Waiting for deployment/packageserver rollout to complete
INFO[0063] Waiting for Deployment "olm/packageserver" to rollout: 0 of 2 updated replicas are available
INFO[0079] Deployment "olm/packageserver" successfully rolled out
INFO[0079] Successfully installed OLM version "latest"

NAME                                            NAMESPACE    KIND                        STATUS
catalogsources.operators.coreos.com                          CustomResourceDefinition    Installed
clusterserviceversions.operators.coreos.com                  CustomResourceDefinition    Installed
installplans.operators.coreos.com                            CustomResourceDefinition    Installed
olmconfigs.operators.coreos.com                              CustomResourceDefinition    Installed
operatorconditions.operators.coreos.com                      CustomResourceDefinition    Installed
operatorgroups.operators.coreos.com                          CustomResourceDefinition    Installed
operators.operators.coreos.com                               CustomResourceDefinition    Installed
subscriptions.operators.coreos.com                           CustomResourceDefinition    Installed
olm                                                          Namespace                   Installed
operators                                                    Namespace                   Installed
olm-operator-serviceaccount                     olm          ServiceAccount              Installed
system:controller:operator-lifecycle-manager                 ClusterRole                 Installed
olm-operator-binding-olm                                     ClusterRoleBinding          Installed
cluster                                                      OLMConfig                   Installed
olm-operator                                    olm          Deployment                  Installed
catalog-operator                                olm          Deployment                  Installed
aggregate-olm-edit                                           ClusterRole                 Installed
aggregate-olm-view                                           ClusterRole                 Installed
global-operators                                operators    OperatorGroup               Installed
olm-operators                                   olm          OperatorGroup               Installed
packageserver                                   olm          ClusterServiceVersion       Installed
operatorhubio-catalog                           olm          CatalogSource               Installed

If the installation fails, run operator-sdk olm uninstall first before trying to install again.

Once the installation completes, check its status:

[root@blog memcached-operator]# operator-sdk olm status
I0406 11:28:17.359874 30074 request.go:665] Waited for 1.041471802s due to client-side throttling, not priority and fairness, request: GET:https://192.168.56.101:6443/apis/admissionregistration.k8s.io/v1?timeout=32s
INFO[0002] Fetching CRDs for version "v0.20.0"
INFO[0002] Fetching resources for resolved version "v0.20.0"
INFO[0007] Successfully got OLM status for version "v0.20.0"

NAME                                            NAMESPACE    KIND                        STATUS
operatorgroups.operators.coreos.com                          CustomResourceDefinition    Installed
operatorconditions.operators.coreos.com                      CustomResourceDefinition    Installed
olmconfigs.operators.coreos.com                              CustomResourceDefinition    Installed
installplans.operators.coreos.com                            CustomResourceDefinition    Installed
clusterserviceversions.operators.coreos.com                  CustomResourceDefinition    Installed
olm-operator-binding-olm                                     ClusterRoleBinding          Installed
operatorhubio-catalog                           olm          CatalogSource               Installed
olm-operators                                   olm          OperatorGroup               Installed
aggregate-olm-view                                           ClusterRole                 Installed
catalog-operator                                olm          Deployment                  Installed
cluster                                                      OLMConfig                   Installed
operators.operators.coreos.com                               CustomResourceDefinition    Installed
olm-operator                                    olm          Deployment                  Installed
subscriptions.operators.coreos.com                           CustomResourceDefinition    Installed
aggregate-olm-edit                                           ClusterRole                 Installed
olm                                                          Namespace                   Installed
global-operators                                operators    OperatorGroup               Installed
operators                                                    Namespace                   Installed
packageserver                                   olm          ClusterServiceVersion       Installed
olm-operator-serviceaccount                     olm          ServiceAccount              Installed
catalogsources.operators.coreos.com                          CustomResourceDefinition    Installed
system:controller:operator-lifecycle-manager                 ClusterRole                 Installed

Let's see which namespaces and pods were created along the way:

[root@blog memcached-operator]# kubectl get ns
NAME              STATUS   AGE
default           Active   185d
kube-node-lease   Active   185d
kube-public       Active   185d
kube-system       Active   185d
liucc-test        Active   7h43m
olm               Active   103m
operators         Active   103m
[root@blog memcached-operator]# kubectl -n olm get po
NAME                                READY   STATUS    RESTARTS   AGE
catalog-operator-5c4997c789-tk5cq   1/1     Running   0          103m
olm-operator-6d46969488-rc8zf       1/1     Running   0          103m
operatorhubio-catalog-nt5vw         1/1     Running   0          103m
packageserver-848bdb76dd-6snj4      1/1     Running   0          103m
packageserver-848bdb76dd-grdx4      1/1     Running   0          103m

Next, package our Operator as a bundle, then build and push the bundle image. The bundle target generates, in the bundle directory, the manifests and metadata that define our operator. The bundle-build and bundle-push targets build and push the bundle image defined by the bundle.Dockerfile file.

[root@blog memcached-operator]# make bundle
/root/memcached-operator/bin/controller-gen rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
operator-sdk generate kustomize manifests -q
Display name for the operator (required):    # required input
> memcached-operator
Description for the operator (required):    # required input
> memcached-operator
Provider's name for the operator (required):    # required input
> lavenliu
Any relevant URL for the provider name (optional):
>
Comma-separated list of keywords for your operator (required):    # required input
> memcached,operator
Comma-separated list of maintainers and their emails (e.g. 'name1:email1, name2:email2') (required):    # required input
> lcc@163.com
cd config/manager && /root/memcached-operator/bin/kustomize edit set image controller=lavenliu/memcached-operator:0.0.1
/root/memcached-operator/bin/kustomize build config/manifests | operator-sdk generate bundle -q --overwrite --version 0.0.1
INFO[0000] Creating bundle/metadata/annotations.yaml
INFO[0000] Creating bundle.Dockerfile
INFO[0000] Bundle metadata generated suceessfully
operator-sdk bundle validate ./bundle
INFO[0000] All validation tests have completed successfully

Let's see whether any new files or directories were created:

[root@blog memcached-operator]# ll -t
total 136
drwxr-xr-x  5 root root  4096 Apr  3 20:11 bundle               # newly created directory
-rw-r--r--  1 root root   923 Apr  3 20:11 bundle.Dockerfile    # newly created file
drwx------  2 root root  4096 Apr  3 19:15 hack
drwxr-xr-x  2 root root  4096 Apr  3 15:41 bin
drwx------  2 root root  4096 Apr  3 15:37 controllers
-rw-------  1 root root  9560 Mar 31 19:03 Makefile
-rw-r--r--  1 root root  2361 Mar 31 16:49 cover.out
-rw-------  1 root root   780 Mar 31 16:46 Dockerfile
-rw-------  1 root root   246 Mar 31 16:20 go.mod
-rw-------  1 root root  3192 Mar 31 14:39 main.go
-rw-------  1 root root   448 Mar 31 14:39 PROJECT
drwx------  3 root root  4096 Mar 31 14:39 api
drwx------ 10 root root  4096 Mar 31 14:39 config
-rw-r--r--  1 root root 77000 Mar 31 14:37 go.sum

Then run the make bundle-build target:

[root@blog memcached-operator]# make bundle-build
docker build -f bundle.Dockerfile -t lavenliu/memcached-operator-bundle:v0.0.1 .
Sending build context to Docker daemon  196.6kB
Step 1/14 : FROM scratch
 --->
Step 2/14 : LABEL operators.operatorframework.io.bundle.mediatype.v1=registry+v1
 ---> Running in acbee848ff30
Removing intermediate container acbee848ff30
 ---> 7840efc1acc7
Step 3/14 : LABEL operators.operatorframework.io.bundle.manifests.v1=manifests/
 ---> Running in 795bbd68aa0b
Removing intermediate container 795bbd68aa0b
 ---> 58c64b2c5bed
Step 4/14 : LABEL operators.operatorframework.io.bundle.metadata.v1=metadata/
 ---> Running in 3ba74dd41232
Removing intermediate container 3ba74dd41232
 ---> 4921148bcd53
Step 5/14 : LABEL operators.operatorframework.io.bundle.package.v1=memcached-operator
 ---> Running in c8ae15420ea2
Removing intermediate container c8ae15420ea2
 ---> 0c417435ceff
Step 6/14 : LABEL operators.operatorframework.io.bundle.channels.v1=alpha
 ---> Running in a4c7d20e793b
Removing intermediate container a4c7d20e793b
 ---> b549d7f0aa94
Step 7/14 : LABEL operators.operatorframework.io.metrics.builder=operator-sdk-v1.15.0+git
 ---> Running in 3dd418069c6b
Removing intermediate container 3dd418069c6b
 ---> a0ead127d313
Step 8/14 : LABEL operators.operatorframework.io.metrics.mediatype.v1=metrics+v1
 ---> Running in 513a0223cafb
Removing intermediate container 513a0223cafb
 ---> 97c961869eb3
Step 9/14 : LABEL operators.operatorframework.io.metrics.project_layout=go.kubebuilder.io/v3
 ---> Running in 24c6fbf30e77
Removing intermediate container 24c6fbf30e77
 ---> e523f8b86a47
Step 10/14 : LABEL operators.operatorframework.io.test.mediatype.v1=scorecard+v1
 ---> Running in 795730b93b89
Removing intermediate container 795730b93b89
 ---> 5f186fccf6fb
Step 11/14 : LABEL operators.operatorframework.io.test.config.v1=tests/scorecard/
 ---> Running in d29664ae092a
Removing intermediate container d29664ae092a
 ---> 7776ff18f767
Step 12/14 : COPY bundle/manifests /manifests/
 ---> cde9d176b798
Step 13/14 : COPY bundle/metadata /metadata/
 ---> 38d589cdd086
Step 14/14 : COPY bundle/tests/scorecard /tests/scorecard/
 ---> 976c6344511b
Successfully built 976c6344511b
Successfully tagged lavenliu/memcached-operator-bundle:v0.0.1

Then run the make bundle-push target:

[root@blog memcached-operator]# make bundle-push
make docker-push IMG=lavenliu/memcached-operator-bundle:v0.0.1
make[1]: Entering directory `/root/memcached-operator'
docker push lavenliu/memcached-operator-bundle:v0.0.1
The push refers to repository [docker.io/lavenliu/memcached-operator-bundle]
36632daec064: Layer already exists
ca08711083d4: Layer already exists
8b7611a97ff6: Layer already exists
v0.0.1: digest: sha256:9adbb5b9e2aede9108f9bba509dc8ca9aa0ed4aad0de6ad37cc8cb4eaa3b6c79 size: 939
make[1]: Leaving directory `/root/memcached-operator'

Finally, run the bundle. If your bundle image is hosted in a private registry or uses a custom CA, follow these configuration steps (https://sdk.operatorframework.io/docs/olm-integration/cli-overview#private-bundle-and-catalog-image-registries).

[root@blog memcached-operator]# operator-sdk run bundle lavenliu/memcached-operator-bundle:v0.0.1

See the docs (https://sdk.operatorframework.io/docs/olm-integration/tutorial-bundle) for a deeper look at operator-sdk's OLM integration.
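The bundle.* LABEL lines in the make bundle-build output above tell OLM how to read the bundle image: its media type, where the manifests/ and metadata/ directories are, the package name, and the channels. make bundle generated bundle/metadata/annotations.yaml alongside bundle.Dockerfile, and the two are expected to carry the same keys. The sketch below is a hypothetical pre-push sanity check that those core keys are present; treating exactly these five keys as required is an assumption, and it is no substitute for operator-sdk bundle validate.

```go
package main

import (
	"fmt"
	"sort"
)

// requiredBundleKeys are the bundle.* keys that appear as LABELs in the
// make bundle-build output. Treating them as the required set is an assumption.
var requiredBundleKeys = []string{
	"operators.operatorframework.io.bundle.mediatype.v1",
	"operators.operatorframework.io.bundle.manifests.v1",
	"operators.operatorframework.io.bundle.metadata.v1",
	"operators.operatorframework.io.bundle.package.v1",
	"operators.operatorframework.io.bundle.channels.v1",
}

// missingBundleKeys returns, sorted, the required keys that are absent or empty.
func missingBundleKeys(annotations map[string]string) []string {
	var missing []string
	for _, k := range requiredBundleKeys {
		if annotations[k] == "" {
			missing = append(missing, k)
		}
	}
	sort.Strings(missing)
	return missing
}

func main() {
	// Values copied from the LABEL steps of the make bundle-build output.
	annotations := map[string]string{
		"operators.operatorframework.io.bundle.mediatype.v1": "registry+v1",
		"operators.operatorframework.io.bundle.manifests.v1": "manifests/",
		"operators.operatorframework.io.bundle.metadata.v1":  "metadata/",
		"operators.operatorframework.io.bundle.package.v1":   "memcached-operator",
		"operators.operatorframework.io.bundle.channels.v1":  "alpha",
	}
	fmt.Println("missing:", missingBundleKeys(annotations))

	delete(annotations, "operators.operatorframework.io.bundle.channels.v1")
	fmt.Println("missing:", missingBundleKeys(annotations))
}
```

In practice these values come from bundle/metadata/annotations.yaml; reading that file would need a YAML parser, which is left out here to keep the sketch dependency-free.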

Summary

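After all the "tinkering" in the previous chapters, we have finally completed an Operator tutorial. Even though we followed the official documentation step by step, the process was still quite bumpy. I hope this article helps and serves as a useful reference for writing Operators.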

Appendix

Recommended reading

- Introduction – The Kubebuilder Book (https://book.kubebuilder.io/introduction.html)

- Operator SDK (operatorframework.io)(https://sdk.operatorframework.io/)

- Kubernetes Operators (book): the operator-sdk version it uses is fairly old, but its explanations are still excellent

- CoreOS's introduction to Operators

- https://kubernetes.io/zh/docs/concepts/extend-kubernetes/operator/

- Find ready-made Operators that suit your needs on OperatorHub.io (https://operatorhub.io/)
